Show HN Today: Discover the Latest Innovative Projects from the Developer Community

Show HN Today: Top Developer Projects Showcase for 2025-08-28

SagaSu777 2025-08-29

Explore the hottest developer projects on Show HN for 2025-08-28. Dive into innovative tech, AI applications, and exciting new inventions!

Summary of Today’s Content

Trend Insights

Today's projects show a clear trend: developers are integrating AI to improve existing workflows and build new applications. Local processing and data privacy are gaining traction, signaling a shift toward more user-centric and secure applications. Tools that simplify complex tasks, from code review to data analysis, point to strong demand for better developer productivity. Developers and entrepreneurs should focus on using AI to solve real-world problems, and open-source, community-driven solutions remain crucial for fostering innovation and collaboration. Dive into the latest AI models, create tools that integrate with existing workflows, and never stop experimenting. Don’t be afraid to build for yourself and share what you learn; it’s in the spirit of hacking that real progress is made.

Today's Hottest Product

Name

SwiftAI – open-source library to easily build LLM features on iOS/macOS

Highlight

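SwiftAI simplifies using LLMs on iOS and macOS through a model-agnostic API. It switches seamlessly between on-device LLMs (such as those provided by Apple) and cloud models, so developers do not need to write redundant code. The core innovation is its model-agnostic API and its priority support for local LLMs, which addresses the compatibility problem of running LLMs across different devices. Developers can learn how to use local LLMs to improve app privacy and lower API costs. With this library, developers can use local LLMs more flexibly, without writing separate code branches for different devices, which speeds up the development of LLM features.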

Popular Category

AI Applications

Developer Tools

iOS/macOS Development

Security Tools

Data Analysis

Open-Source Tools

Popular Keyword

LLM

AI

Swift

Open Source

API

Security

Technology Trends

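Local-first AI application development: Developers are starting to run AI models on local devices to improve privacy and reduce costs. Examples include SwiftAI and Grammit's local processing.

AI-powered application development: Rapid advances in AI are opening up new possibilities for all kinds of applications, such as AI-based grammar checking and AI image editing.

Multimodal data processing and analysis: For example, VAERS DuckDB integrates and analyzes data from different sources to provide more comprehensive data insights.

The rise of no-code/low-code tools: Using GPT and other technologies to create low-cost or zero-cost tools.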

Project Category Distribution

AI Applications (35%)

Developer Tools (30%)

Productivity Tools (15%)

Security Tools (10%)

Other (10%)

Today's Hot Product List

| Ranking | Product Name | Likes | Comments |
|---|---|---|---|
| 1 | SwiftAI: Unified LLM Access for Swift Developers | 63 | 15 |
| 2 | Duebase AI: Instant UK Company Financial Health Analysis | 15 | 29 |
| 3 | Private LLM Subscription: Your Privacy-Focused AI Companion | 21 | 13 |
| 4 | Grammit: Local LLM-Powered Grammar Guardian | 26 | 4 |
| 5 | Yoink AI: Context-Aware AI Text Editor for macOS | 19 | 8 |
| 6 | MCPcat: Effortless Observability for MCP Servers | 13 | 3 |
| 7 | GrowChief - Open-Source Social Media Outreach Tool | 8 | 2 |
| 8 | Runcell: An AI Agent for Jupyter Lab | 8 | 1 |
| 9 | oLLM: Optimized Large Language Model Inference for Consumer GPUs | 3 | 6 |
| 10 | Linkfy: On-Device URL Cleaner | 3 | 4 |

1

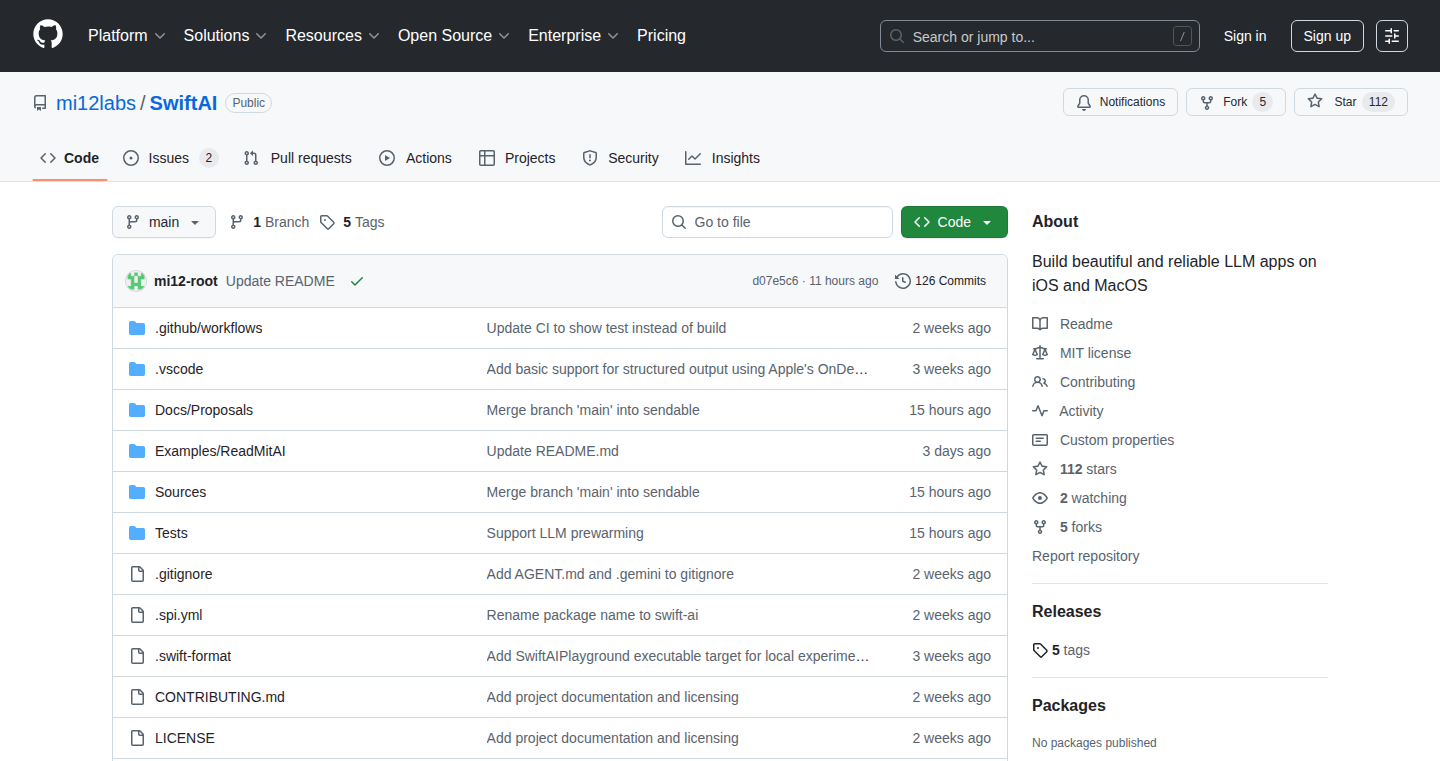

SwiftAI: Unified LLM Access for Swift Developers

Author

mi12-root

Description

SwiftAI is an open-source Swift library that simplifies the integration of Large Language Models (LLMs) in iOS and macOS applications. It cleverly switches between Apple's on-device LLMs (when available) and cloud-based models, all while keeping your code clean and consistent. This eliminates the need for developers to write separate code paths for local and cloud LLMs, offering a unified API for LLM functionalities. The project aims to provide a streamlined experience for developers to use LLMs regardless of the underlying infrastructure, improving privacy, reducing cost, and enhancing user experience. The core innovation lies in its ability to abstract away the complexities of LLM deployment, enabling developers to easily incorporate powerful AI features into their applications with minimal effort.

Popularity

Points 63

Comments 15

What is this product?

SwiftAI is a Swift library that provides a single, easy-to-use interface for accessing LLMs. It handles the complexity of using both on-device and cloud-based LLMs: when an Apple device’s local LLM is available, it uses that; if it is not available (due to device limitations or other reasons), it falls back to a cloud-based LLM. You build your LLM features once, and SwiftAI chooses the appropriate model for the situation. The library includes a single, model-agnostic API, an agent/tool loop, strongly-typed structured outputs, and optional chat state. This approach improves privacy, reduces cost, and enhances the overall user experience.
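The on-device-first, cloud-fallback pattern described above can be illustrated with a small sketch. It is written in Python rather than Swift and is not SwiftAI's actual API: the names `LLM`, `OnDeviceModel`, `CloudModel`, `FallbackLLM`, and `haiku` are all hypothetical, and the "models" simply echo the prompt so the selection logic is easy to follow.

```python
from dataclasses import dataclass
from typing import Protocol


class LLM(Protocol):
    """Minimal model-agnostic interface (hypothetical, not SwiftAI's API)."""

    def is_available(self) -> bool: ...
    def reply(self, prompt: str) -> str: ...


@dataclass
class OnDeviceModel:
    available: bool  # stands in for a real device-capability check

    def is_available(self) -> bool:
        return self.available

    def reply(self, prompt: str) -> str:
        return f"[on-device] {prompt}"


@dataclass
class CloudModel:
    def is_available(self) -> bool:
        return True  # this sketch assumes the network is reachable

    def reply(self, prompt: str) -> str:
        return f"[cloud] {prompt}"


@dataclass
class FallbackLLM:
    """Tries each backend in order and uses the first available one."""

    backends: list

    def is_available(self) -> bool:
        return any(b.is_available() for b in self.backends)

    def reply(self, prompt: str) -> str:
        for backend in self.backends:
            if backend.is_available():
                return backend.reply(prompt)
        raise RuntimeError("no LLM backend is available")


def haiku(llm: LLM) -> str:
    # Application code depends only on the shared interface.
    return llm.reply("Write a haiku about Swift")
```

Because `haiku` only sees the shared interface, the same call works whether the local model or the cloud model ends up answering, which is the property the unified API is meant to provide.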

How to use it?

To use SwiftAI, developers simply import the library into their Swift project and use its unified API to interact with LLMs. The library takes care of selecting the appropriate LLM (on-device or cloud-based) based on device capabilities and availability. Developers define the LLM they want to use and SwiftAI handles the rest. For example, the project offers an example that lets you easily ask an LLM to write a haiku. This approach reduces the code needed to create LLM-powered features. You can use the provided example code to start with the basic functionality, which can be expanded with more complex features such as agent loops and structured outputs.

Product Core Function

· Unified API: This allows developers to use LLMs without needing to worry about the underlying infrastructure (on-device or cloud). The SwiftAI library handles the switching, meaning less code and less hassle. It simplifies LLM integration, so you can quickly incorporate AI-powered features into your applications.

· Agent/Tool Loop: SwiftAI can be used to create AI agents that perform multiple tasks, using various tools, without requiring code modifications. This unlocks the potential for more dynamic and complex interactions, building powerful AI-powered features like chatbots, task automation, and intelligent assistants within your apps.

· Strongly-typed Structured Outputs: Enables structured responses from LLMs, so data is parsed into typed values rather than handled as free-form text. This makes it easier to extract information and integrate it into your app, leading to cleaner code and simpler handling of LLM responses.

· Optional Chat State: Provides the ability to maintain a chat history, allowing for more engaging and context-aware interactions with the LLMs. This improves user engagement by creating more natural and responsive AI-powered features, such as chatbots, virtual assistants, and conversational interfaces within your applications.

Product Usage Case

· Building a Privacy-Focused Chatbot: Developers can use SwiftAI to build a chatbot that prioritizes user privacy by using on-device LLMs when available, and using cloud-based LLMs only as a fallback. This ensures that user data stays on the device whenever possible. The unified API allows for easy switching between models, which enhances user privacy and maintains a consistent experience.

· Creating an Offline-Capable Assistant: SwiftAI can be used to integrate an intelligent assistant into an app that can perform tasks even without an internet connection. When the device is offline and a local LLM is available, the assistant uses the local LLM. This makes the app more reliable and user-friendly in areas with poor or no internet connectivity.

· Developing a Content Generation Tool: Developers can create an app that uses LLMs to generate content, such as articles, summaries, or creative text. SwiftAI's unified API abstracts the underlying LLM, making it easier to generate diverse content formats and integrate them into applications.

2

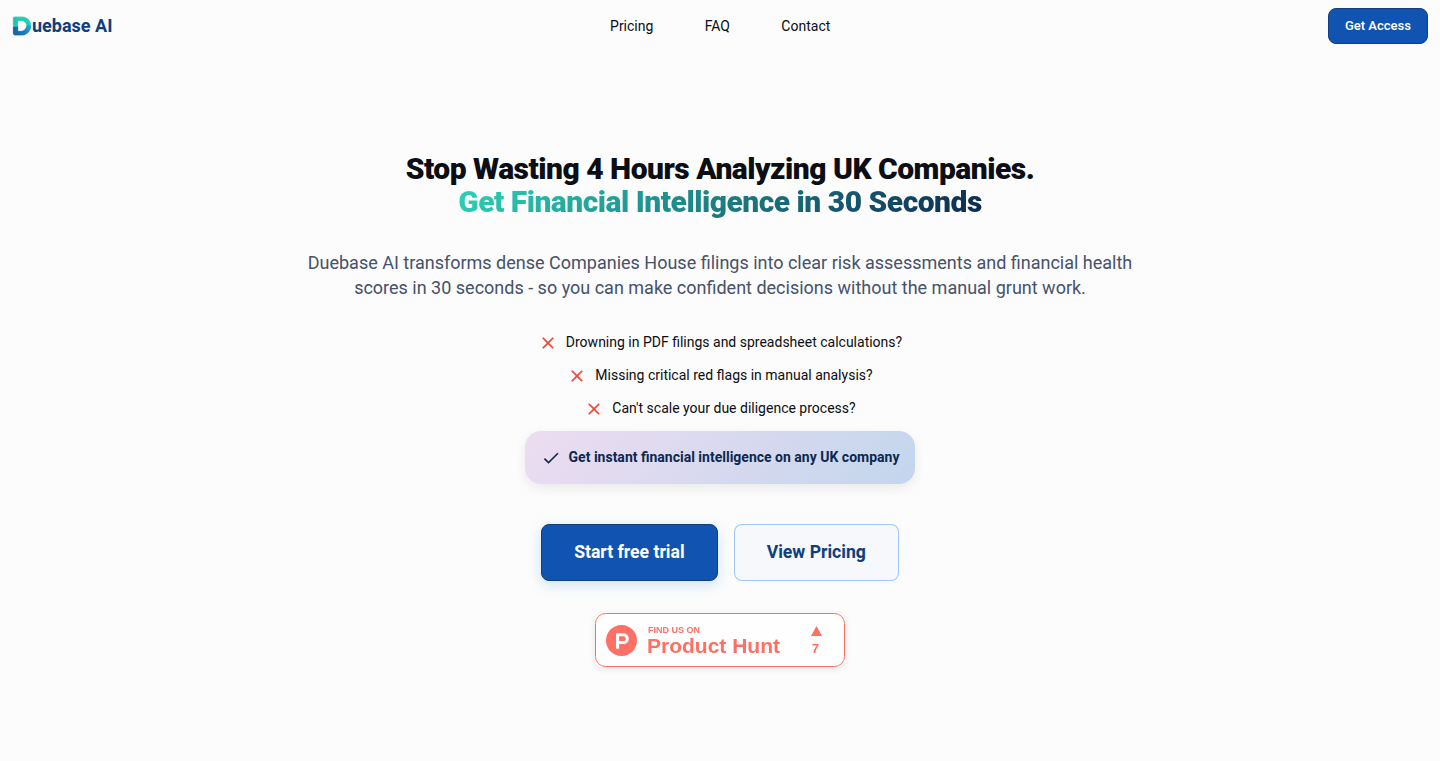

Duebase AI: Instant UK Company Financial Health Analysis

Author

superproton

Description

Duebase AI is a tool that uses Artificial Intelligence to instantly analyze the financial health of UK companies. It solves the problem of time-consuming financial analysis, which typically involves manually extracting data from PDF filings and interpreting trends. The core innovation lies in its ability to automatically parse, extract, and normalize data from messy and inconsistent UK company filings, generating health scores and explanations in plain English. This saves hours of manual work and requires no prior financial expertise.

Popularity

Points 15

Comments 29

What is this product?

Duebase AI uses Machine Learning models to understand and extract financial data from the complex and varied formats of UK company filings from the Companies House API. It standardizes this data, calculates key financial ratios like liquidity and profitability, and generates a health score. This involves handling data inconsistencies, cleaning the data, and understanding UK accounting standards. The innovation is the automation of the traditionally manual and expertise-heavy financial analysis process, making it accessible and fast. So this means I can quickly understand the financial health of a UK company without needing to be a finance expert.
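To make the ratio-and-score step concrete, here is a minimal sketch in Python. It is not Duebase's method: the `Filing` fields, the caps, and the equal weighting in `health_score` are assumptions made up for illustration. Only the two ratio definitions (current assets over current liabilities, net profit over revenue) are standard.

```python
from dataclasses import dataclass


@dataclass
class Filing:
    # Figures in GBP; field names are illustrative, not a real schema.
    current_assets: float
    current_liabilities: float
    revenue: float
    net_profit: float


def current_ratio(f: Filing) -> float:
    """Liquidity: current assets divided by current liabilities."""
    return f.current_assets / f.current_liabilities


def net_profit_margin(f: Filing) -> float:
    """Profitability: net profit as a fraction of revenue."""
    return f.net_profit / f.revenue


def health_score(f: Filing) -> int:
    """Toy 0-100 score: an equal-weight blend of two capped ratios.

    The caps and weights are invented for this sketch.
    """
    # A current ratio of 2.0 or more earns full liquidity marks.
    liquidity = min(current_ratio(f) / 2.0, 1.0)
    # A margin of 20% or more earns full profitability marks; losses earn none.
    profitability = min(max(net_profit_margin(f) / 0.20, 0.0), 1.0)
    return round(50 * liquidity + 50 * profitability)


example = Filing(current_assets=320_000, current_liabilities=200_000,
                 revenue=1_000_000, net_profit=100_000)
score = health_score(example)
```

A production system would need many more inputs (and the data cleaning the description mentions), but the shape is the same: normalized figures in, ratios computed, a single summary number out.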

How to use it?

Developers can access the financial health analysis through an API or web interface. The API allows for integration into existing financial systems, such as risk assessment tools, due diligence processes, or portfolio management platforms. It's particularly useful for anyone who needs to quickly assess the financial standing of UK companies, such as investors, lenders, or business analysts. You can integrate it to automatically monitor the health of your clients or investments and get alerted about significant changes. So this means I can automate my due diligence and risk assessment processes.

Product Core Function

· Automated Data Extraction and Normalization: It automatically extracts data from various PDF formats and normalizes it into a usable format. This is a huge time-saver, especially if you often work with financial reports. This allows you to instantly transform unstructured data into a structured format suitable for further analysis.

· Financial Ratio Calculation and Trend Analysis: It calculates important financial ratios and analyzes trends over time, providing insights into a company's performance. It helps you understand a company's financial health, allowing for informed decision-making.

· Health Score Generation: It generates a health score that summarizes a company's financial health, making it easy to understand. It provides a quick snapshot of a company's financial situation, saving time and effort.

· Real-time Monitoring and Alerts: It monitors new filings and director changes, sending alerts about significant events. You can stay updated about the company's financial health and be notified of any changes. This helps to quickly spot risks or opportunities.

Product Usage Case

· Due Diligence: A venture capital firm uses Duebase AI to quickly assess the financial health of potential investment targets, streamlining their due diligence process. This allows them to make faster, more informed investment decisions.

· Risk Management: A bank integrates Duebase AI to monitor the financial health of their loan portfolio, receiving alerts when a company's health score deteriorates. They can proactively manage their credit risk and mitigate potential losses.

· Market Research: A market research firm uses Duebase AI to analyze the financial performance of companies within a specific industry, identifying market trends and opportunities. They can gain valuable insights into market dynamics and make strategic recommendations.

3

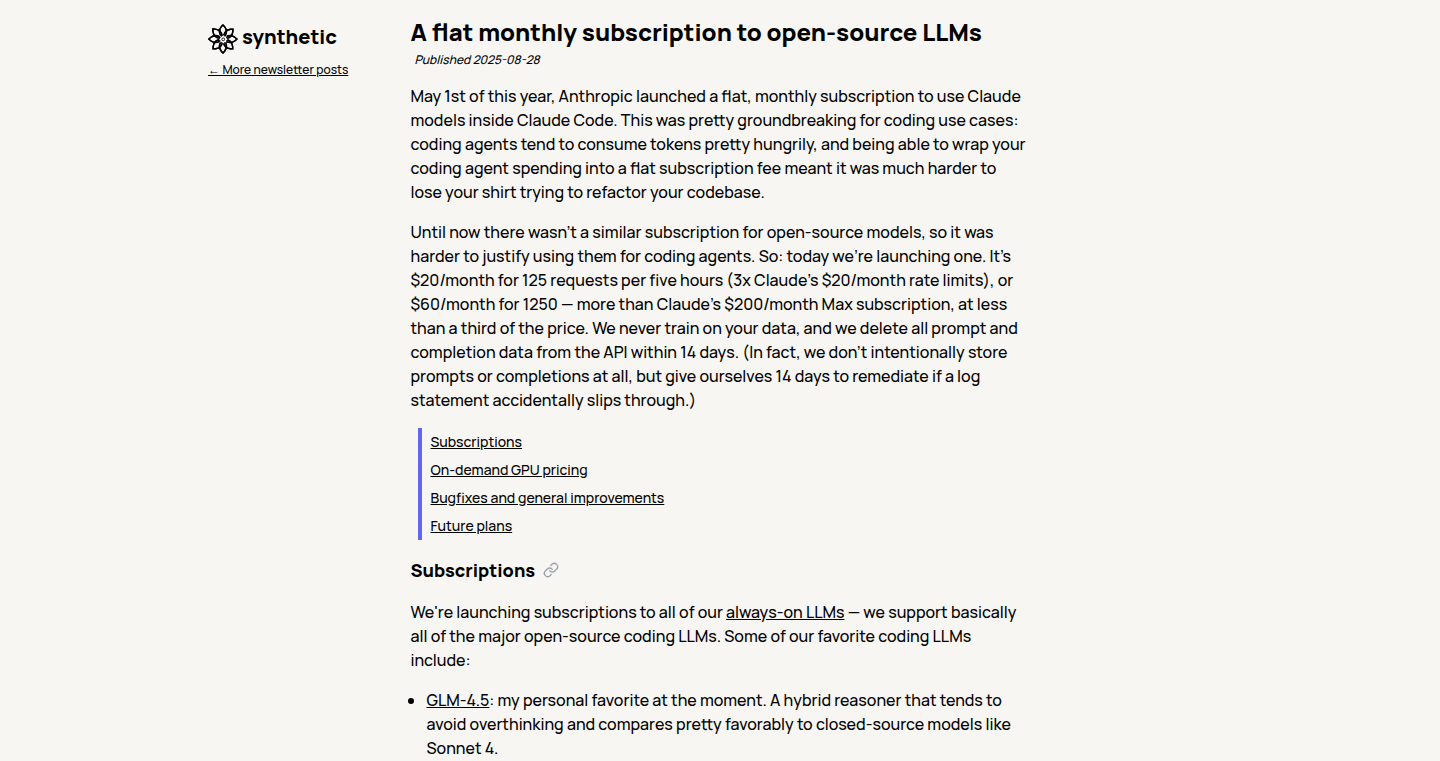

Private LLM Subscription: Your Privacy-Focused AI Companion

Author

reissbaker

Description

This project offers a flat monthly subscription for access to open-source Large Language Models (LLMs), focusing on privacy. It's designed as a privacy-conscious alternative to services like OpenAI, offering higher rate limits than some competitors. The core innovation lies in providing a readily accessible, competitively priced, and privacy-respecting LLM service, allowing developers to integrate powerful AI capabilities without compromising user data or facing stringent rate limits.

Popularity

Points 21

Comments 13

What is this product?

This service provides access to open-source LLMs via a monthly subscription, much like subscribing to a cloud service. The key is its emphasis on privacy. It's built to function seamlessly with various compatible tools and clients, acting like a plug-and-play AI engine. The innovation is in offering this service at a competitive price with enhanced rate limits and a strong focus on user data security. So what? This means developers get access to cutting-edge AI without sacrificing control over their data or facing restrictions.

How to use it?

Developers can use this service by integrating it with compatible API clients such as Cline, Roo, KiloCode, or Aider. This involves simply swapping the OpenAI API endpoint in the client with the one provided by this service. It's designed to be compatible with any OpenAI-compatible client. This allows developers to tap into the power of LLMs for tasks like text generation, code completion, and more. So what? It provides developers an easy-to-integrate AI solution for their projects, offering a smooth integration with existing tools and reducing the barrier to entry for AI-powered applications.
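As a rough illustration of what "swapping the endpoint" means for an OpenAI-compatible client, the Python sketch below builds a chat completion request against a configurable base URL. The provider URL, API key, and model name are placeholders, not values from this service; the `/chat/completions` path and bearer-token header follow the OpenAI-style convention that compatible clients rely on. The request is constructed but not sent.

```python
import json
import urllib.request

# Placeholder values: substitute the base URL and key your provider gives you.
OPENAI_BASE_URL = "https://api.openai.com/v1"
PROVIDER_BASE_URL = "https://llm.example.com/v1"  # hypothetical endpoint


def build_chat_request(base_url: str, api_key: str, model: str,
                       prompt: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Only the base URL (and key) changes; the request shape stays the same.
request = build_chat_request(PROVIDER_BASE_URL, "sk-placeholder",
                             "some-open-model", "Hello")
# Sending it would be: urllib.request.urlopen(request)
```

In tools such as Aider or Cline the same change is usually a configuration setting rather than code, but the effect is the one shown here: identical requests, different host.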

Product Core Function

· LLM Access: Provides access to powerful open-source LLMs. This helps with tasks like content creation, data analysis, and chatbot development. So what? It allows developers to infuse AI into their projects rapidly without the need to build and manage models.

· Flat Monthly Subscription: Offers a predictable, flat-fee pricing model. This avoids unpredictable usage charges and budget surprises. So what? It enables developers to forecast costs and plan their budgets more effectively, allowing them to commit to a certain amount of AI usage per month.

· Privacy-Focused: Emphasizes user privacy and data security. This ensures user data is protected and handled responsibly. So what? It allows developers to build applications that take user privacy seriously, attracting privacy-conscious users and avoiding potential legal issues.

· High Rate Limits: Provides rate limits exceeding those of some competitors (like Claude). This ensures smooth operations and less downtime. So what? This reduces the risk of service interruptions, ensuring better performance and responsiveness for your AI-driven applications.

Product Usage Case

· Content Creation: Use the LLM to generate blog posts, articles, or marketing copy. A developer can integrate the service to create a content generator within their application, automatically producing various types of text. So what? It streamlines content production and reduces the time and cost of creating high-quality content.

· Code Completion: Developers can leverage the LLM to get code suggestions and automatic code completion, especially helpful in tools like Aider or similar development environments. So what? It increases developer productivity and reduces coding errors.

· Chatbot Development: Integrate the LLM into a chatbot for customer service, support, or interactive applications. The service provides a back-end for building conversational interfaces that can answer questions and assist users. So what? It gives developers a foundation for building smart, responsive chatbots without needing to build their own natural language understanding engine.

4

Grammit: Local LLM-Powered Grammar Guardian

Author

scottfr

Description

Grammit is a Chrome extension that uses a Large Language Model (LLM) to perform grammar checks directly on your computer, without sending your writing to any external servers. This means your writing stays private. Beyond basic grammar and spell checks, Grammit can correct factual errors and offer writing suggestions. It leverages cutting-edge web technologies like the Chrome Prompt API, Anchor Positioning API, CSS Custom Highlights API, and the CSS sign() function to provide a smooth and efficient user experience, showcasing innovative use of browser capabilities.

Popularity

Points 26

Comments 4

What is this product?

Grammit is a Chrome extension that acts as your personal grammar checker, but unlike many others, it doesn't send your text to the cloud. It uses a powerful AI model that runs on your own computer to analyze your writing. The cool part is it not only catches spelling and grammar mistakes but also helps you improve your writing style, even correcting some factual errors. Think of it as having a smart editor right inside your browser. So this is great for anyone who values privacy and wants to ensure their writing is polished.

How to use it?

You install Grammit as a Chrome extension. Once installed, it integrates seamlessly into your browsing experience. As you type in text fields, like emails, documents, or social media posts, Grammit automatically analyzes your writing and highlights errors or offers suggestions in real-time. You can then review and accept the changes. Developers can use this as a blueprint to build similar applications, utilizing the described APIs. For example, if you were building an app that needs to process text, you can learn how to do it locally, ensuring user privacy.

Product Core Function

· Local Grammar Checking: This is the main function; it scans your text and checks for grammatical errors and spelling mistakes, all without sending your text to a server. This means your data is safe and protected, offering a great privacy-focused solution.

· Fact Checking and Correction: The LLM powering Grammit can do more than just basic grammar. It can identify and correct inaccurate statements, making sure your content is not only well-written but also accurate. This is perfect for writers and researchers.

· In-Page Writing Assistant: Grammit includes a feature that can rephrase or draft new text right where you're writing. It helps to improve your writing style, providing suggestions to make your content more engaging and clearer. This is incredibly helpful for overcoming writer's block or refining your language.

· Utilization of Chrome Prompt API: This leverages the new Prompt API to directly communicate with the local LLM. So this enables real-time analysis and feedback on the text, without the need for external servers.

· Use of Anchor Positioning API, CSS Custom Highlights API, CSS sign() function: These newer web platform features are used to build Grammit's user interface elements within the browser. They help the extension's interface look and behave like a natural part of the page, so it integrates seamlessly with whatever site you are typing on.

Product Usage Case

· Secure Email Composition: Use Grammit to draft and review emails with confidence, knowing that your private messages will never be sent to an external server for grammar checks. This ensures your sensitive business communications remain private.

· Academic Writing Assistance: Students and academics can use Grammit to check papers and research documents, ensuring accuracy and clarity without sharing their work with third-party services. So your research data is private.

· Content Creation and Blogging: Bloggers and content creators can use Grammit to refine their posts and articles. It offers suggestions for better writing. So you can create high-quality content and improve your SEO.

· Developer Inspiration: Developers can use Grammit's architecture as a foundation to build similar local-first AI-powered tools, leveraging the Chrome Prompt API and other web APIs to create innovative applications that prioritize user privacy and data security. The same approach could be used to build apps that transcribe audio locally, summarize large documents, and so on.

· Document Review and Editing: Professionals in any field can use Grammit for document review and editing. You can improve the quality of your documents, and avoid embarrassing typos.

5

Yoink AI: Context-Aware AI Text Editor for macOS

Author

byintes

Description

Yoink AI is a macOS application designed to integrate AI-powered text editing directly into your existing workflow. It tackles the common problem of needing to constantly switch between applications and copy-paste text to use AI for simple edits. The innovation lies in its ability to understand the context of the text field you're working in and provide inline suggestions, allowing for a more seamless and efficient editing experience. This minimizes disruption and streamlines how you use AI for writing and editing.

Popularity

Points 19

Comments 8

What is this product?

Yoink AI is like having an AI assistant integrated directly into any text field on your Mac. It works by understanding the context of what you're typing and offering suggestions. Unlike chatbots that require you to copy and paste text, Yoink AI operates directly within your app. It also lets you train custom 'voices' based on your own writing style, so the AI's suggestions feel more personalized. So this is useful because you can get help with writing and editing without leaving the app you are using, instead of constantly switching apps and pasting your text into a chat.

How to use it?

To use Yoink AI, you simply trigger it with a hotkey (⌘ Shift Y) within any text field. Yoink AI then analyzes the text and provides inline suggestions, which you can accept or reject. You can also customize the AI's writing style by training it on your own writing samples. This means you can use it in any app where you type, like email, word processors, or even code editors. So you can use it anytime you need to edit text, like fixing grammar, changing tone, or generating different versions of your content.

Product Core Function

· Contextual Awareness: Yoink AI automatically understands the text field's context, which means it knows what you are working on without you having to explain it. This reduces the need for manual input and improves the efficiency of the editing process. For you, this means you don't need to manually specify what you want the AI to do.

· Custom Voice Training: You can train Yoink AI on your own writing style, creating a personalized 'voice' for the AI to use. This ensures that the AI's suggestions match your writing style, making the output more natural and less generic. You can now use this to get more tailored, human-sounding edits that fit your personal style.

· Inline Editing with Redline Suggestions: Instead of just generating text, Yoink AI offers suggestions as 'redline edits' that you can accept or reject. This gives you full control over the edits, allowing you to review and refine them as needed. So, it gives you complete control of the output without having to re-write the whole text, and it lets you review and change any editing by the AI.

· Workflow Integration: The app is designed to integrate directly into any text field in any app on your Mac. This seamless integration minimizes disruption to your workflow and makes it easy to get help with writing and editing tasks wherever you are working. This lets you get editing help without ever leaving your existing apps.

· Hotkey Activation: The use of a simple hotkey (⌘ Shift Y) makes it quick and easy to activate Yoink AI, ensuring minimal interruption to your workflow. It lets you get the AI suggestions in a second.
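The accept-or-reject "redline" idea from the list above can be sketched with Python's standard `difflib`. This is an illustration of the general technique, not Yoink AI's implementation: the word-level granularity and the function names `redline` and `apply_edits` are assumptions chosen for this example.

```python
import difflib


def redline(original: str, suggestion: str) -> list[tuple[str, str, str]]:
    """Return word-level edits as (operation, old_text, new_text) tuples."""
    old_words, new_words = original.split(), suggestion.split()
    matcher = difflib.SequenceMatcher(a=old_words, b=new_words, autojunk=False)
    edits = []
    for op, i1, i2, j1, j2 in matcher.get_opcodes():
        if op != "equal":
            edits.append((op, " ".join(old_words[i1:i2]), " ".join(new_words[j1:j2])))
    return edits


def apply_edits(original: str, suggestion: str, accepted: set[int]) -> str:
    """Rebuild the text, keeping only the edits whose index is in `accepted`."""
    old_words, new_words = original.split(), suggestion.split()
    matcher = difflib.SequenceMatcher(a=old_words, b=new_words, autojunk=False)
    result, edit_index = [], 0
    for op, i1, i2, j1, j2 in matcher.get_opcodes():
        if op == "equal":
            result.extend(old_words[i1:i2])
            continue
        # Accepted edits take the new words; rejected ones keep the old words.
        result.extend(new_words[j1:j2] if edit_index in accepted else old_words[i1:i2])
        edit_index += 1
    return " ".join(result)


original = "I wants to send this mail quick"
suggestion = "I want to send this email quickly"
edits = redline(original, suggestion)
partly_accepted = apply_edits(original, suggestion, accepted={0})
```

Treating each change as a separate, indexed edit is what lets the user keep one suggestion and discard another, rather than taking or leaving the AI's rewrite as a whole.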

Product Usage Case

· Email Editing: You're writing an email and need help rephrasing a sentence to sound more professional. Using the hotkey, Yoink AI can suggest alternative phrasing directly within your email app, such as Gmail or Outlook. So, now you can fix your emails without having to copy and paste the text to an AI.

· Content Creation: You're drafting a blog post and want to improve a paragraph. Yoink AI can analyze the text and suggest improvements to the tone, clarity, or style, all within your word processor. So, you can improve your content quickly.

· Code Comments: You're writing code and want to add clear and concise comments. Yoink AI can help you write better comments directly in your code editor. So, the AI can help you to document your code better and save your time.

· Social Media Posts: You're preparing a social media update and need help optimizing the text. Yoink AI can suggest variations to increase engagement, all within your social media platform's text field. So, you can use AI to create engaging social media content faster.

6

MCPcat: Effortless Observability for MCP Servers

Author

kashishhora

Description

MCPcat is a free, open-source library designed to simplify logging and observability for MCP (Model Context Protocol) servers. It addresses the common challenges of integrating monitoring tools by providing a one-line solution that leverages OpenTelemetry, a standard for collecting and exporting telemetry data. The library categorizes events within a user session and sends them to your chosen third-party provider like Datadog or Sentry, allowing developers to understand user behavior and debug issues quickly. The library also offers a dashboard for visualizing user journeys, aiding in understanding how users interact with the MCP server.

Popularity

Points 13

Comments 3

What is this product?

MCPcat is a library that makes it super easy to monitor what's going on inside your MCP server. It works by "listening" to what your server is doing and sending this information to tools you already use, like Datadog or Sentry. The cool part is it uses something called OpenTelemetry, which is like a universal translator for sending data to these tools. It also connects all the actions a user takes, so you can see what they are doing step by step. So you can understand the user journey and debug problems in a more efficient way.

How to use it?

Developers can integrate MCPcat into their MCP server with a single line of code using the provided Python or TypeScript SDKs. For example, in the code, you'd use a command similar to `mcpcat.track(serverObject, {...options…})`. This sets up listeners that automatically track events and send them to the configured monitoring tools. Developers need to have an account with a service like Datadog or Sentry. After installing the SDK, simply add the track function and then deploy to your server environment. This makes debugging much easier.

Product Core Function

· One-Line Integration with OpenTelemetry: Allows you to quickly add monitoring to your server without complex setup. This is valuable because it saves developers a lot of time and effort when setting up monitoring.

· User Session Categorization: Groups events into working sessions to understand how a user interacts with the system. This helps developers track what a user did during a session, making it easier to find the root cause of any issues.

· Support for Common Monitoring Vendors (Datadog, Sentry): Direct integration with popular tools used by many developers. This eliminates the need to build custom integrations for each vendor, simplifying the setup process.

· Data Redaction: Provides the option to protect sensitive data. Useful to comply with privacy regulations and avoid logging personal information.

· User Journey Visualization Dashboard: An optional dashboard for visualizing user behavior in more detail. This gives you a deeper understanding of how users are actually using your service, so you can optimize it for their experience.
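Two of the ideas in the list above, session grouping and data redaction, can be shown in a few lines of Python. This sketch does not use or reproduce the MCPcat SDK: the event fields (`session_id`, `ts`, `tool`), the `SENSITIVE_KEYS` set, and both function names are hypothetical and chosen only to illustrate the concepts.

```python
from collections import defaultdict

SENSITIVE_KEYS = {"email", "api_key", "password"}  # illustrative choice


def redact(event: dict) -> dict:
    """Return a copy of the event with sensitive values masked."""
    return {k: ("[REDACTED]" if k in SENSITIVE_KEYS else v) for k, v in event.items()}


def group_by_session(events: list[dict]) -> dict[str, list[dict]]:
    """Group redacted events by session ID, ordered by timestamp."""
    sessions: dict[str, list[dict]] = defaultdict(list)
    for event in sorted(events, key=lambda e: e["ts"]):
        sessions[event["session_id"]].append(redact(event))
    return dict(sessions)


events = [
    {"session_id": "s1", "ts": 2, "tool": "search", "email": "a@example.com"},
    {"session_id": "s2", "ts": 1, "tool": "login"},
    {"session_id": "s1", "ts": 1, "tool": "list_tools"},
]
journeys = group_by_session(events)
```

Redacting before events are stored or exported, as done here, means sensitive values never reach the monitoring backend at all.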

Product Usage Case

· Debugging Production Issues: A developer notices a sudden increase in errors on their MCP server. By using MCPcat, they can quickly identify which user sessions were affected and pinpoint the exact actions that triggered the errors. This dramatically reduces the time to resolve the problem.

· Performance Optimization: A team wants to improve the performance of their MCP server. Using the insights from MCPcat, they can identify the slowest parts of the user journey and optimize the code to improve response times and user experience. For example, a database query might be taking too long, so it can be optimized.

· Understanding User Behavior: A product manager wants to understand how users are using a new feature. They use MCPcat's dashboard to visualize user journeys and identify common paths, allowing them to adjust the feature for improved usability. This helps improve the overall product.

· Monitoring Security Events: A security team wants to monitor for unusual activity on the server. By integrating with MCPcat and configuring appropriate filters, they can track events related to user logins, access to sensitive data, and other security-related actions. This provides a complete audit trail.

7

GrowChief - Open-Source Social Media Outreach Tool

Author

nevodavid

Description

GrowChief is an open-source tool designed to help users with social media outreach. It tackles the challenge of managing and optimizing social media engagement by providing features for tracking, analyzing, and automating interactions with potential followers. The innovation lies in its open-source nature, allowing developers to customize and extend its capabilities. It focuses on automating repetitive outreach tasks, helping users streamline their social media strategies, and providing insights into what works best for them.

Popularity

Points 8

Comments 2

What is this product?

GrowChief is a software that helps you reach out to people on social media. Instead of manually messaging people, GrowChief helps you automate and track your outreach. Its innovative aspect is that it's open-source, meaning anyone can see how it works and even change it to fit their specific needs. It uses various APIs (Application Programming Interfaces) to connect with different social media platforms, allowing it to send messages, analyze responses, and help you understand what kind of content and interactions get the best results.

How to use it?

Developers can use GrowChief by downloading the code from its repository and setting it up on their own server or computer. Then, they can connect it to their social media accounts. The tool would automate tasks such as sending personalized messages, tracking user engagement metrics, and analyzing which strategies are most effective. Imagine setting up a campaign to find new customers or collaborators; GrowChief would handle the repetitive tasks, like sending introductory messages, so the developer can focus on building relationships and refining their strategy. Its modular design enables developers to integrate it into their existing workflows or build custom features. So if you are a developer who wants to grow your social media reach, or build and sell similar tools, you could benefit from it.

Product Core Function

· Automated Message Sending: This lets you send personalized messages to potential followers or contacts on social media, saving you time and effort. So you won't have to manually send messages to hundreds of people.

· Engagement Tracking: It monitors how people interact with your outreach efforts, such as who replies, clicks on your links, or follows you. This helps you measure your outreach success. Knowing who’s interested helps you focus your time and energy.

· Performance Analytics: GrowChief analyzes the data it collects to show you what outreach strategies are working best. For example, it shows which message formats and content types get the most responses. This lets you fine-tune your approach for maximum impact and learn what strategies produce results.

· Platform Integration: The tool integrates with several social media platforms through APIs, handling the technical steps of connecting to the social media accounts and automating actions. You don't need to get into the nitty-gritty details of how these platforms work.
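The message personalization and performance analytics described above boil down to filling templates and comparing reply rates. The Python sketch below shows that idea in isolation; it is not GrowChief's code, and the template IDs, the log format, and the function names are all made up for illustration.

```python
from collections import Counter
from string import Template

TEMPLATES = {
    "short": Template("Hi $name, loved your post on $topic!"),
    "detailed": Template("Hi $name, I read your post on $topic and would like to collaborate."),
}


def render(template_id: str, name: str, topic: str) -> str:
    """Fill a message template with per-recipient details."""
    return TEMPLATES[template_id].substitute(name=name, topic=topic)


def reply_rates(log: list[tuple[str, bool]]) -> dict[str, float]:
    """Compute the fraction of sent messages that got a reply, per template."""
    sent, replied = Counter(), Counter()
    for template_id, got_reply in log:
        sent[template_id] += 1
        replied[template_id] += int(got_reply)
    return {t: replied[t] / sent[t] for t in sent}


# Each log entry records which template was sent and whether it got a reply.
log = [("short", True), ("short", False), ("detailed", True), ("detailed", True)]
rates = reply_rates(log)
best = max(rates, key=rates.get)
```

With real campaigns the sample sizes matter: a template that got two replies out of two messages is not yet evidence of anything, so rates like these are best read alongside the send counts.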

Product Usage Case

· Marketing Automation: A marketing agency could use GrowChief to automate their outreach campaigns on platforms like Twitter or LinkedIn. They can automatically send messages to potential clients. By tracking engagement metrics, they can see which messages and strategies result in the most leads, improving their marketing ROI (Return On Investment).

· Lead Generation: A sales team might use GrowChief to reach out to potential customers on social media. They can automatically send out tailored messages, then follow up automatically based on each recipient's response to build their sales pipeline and drive growth.

· Community Building: An open-source project lead can use GrowChief to connect with potential contributors. It can send out personalized invitations to join their project, encouraging collaboration and building the project's community. They would be able to track which outreach methods worked best and adjust their strategies accordingly.

8

Runcell: An AI Agent for Jupyter Lab

Author

loa_observer

Description

Runcell is an AI agent designed to work within Jupyter Lab, a popular environment for data scientists and programmers. Its key innovation is its ability to understand the context of your Jupyter notebook – including data, charts, and existing code – and then write code for you. Unlike other AI tools that treat Jupyter notebooks as static files, Runcell interacts directly with the Jupyter kernel, giving it access to real-time information and allowing it to edit and execute specific code cells. This makes it a powerful tool for automating tasks, accelerating development, and exploring data interactively.

Popularity

Points 8

Comments 1

What is this product?

Runcell is like having a smart assistant inside your Jupyter Lab. It's built on the principles of AI agents, which are programs that can perform actions and make decisions based on their understanding of the environment. In Runcell's case, the environment is your Jupyter notebook. The AI agent can understand the contents of your notebook (like the data you're working with, the charts you've created, and the code you've already written), and then use this knowledge to write code for you. It can also use tools such as reading and writing files and searching the web. So what? This allows you to automate repetitive tasks, quickly prototype ideas, and more easily explore your data. Runcell's direct access to the Jupyter kernel is the key innovation, which allows for deeper understanding and control compared to tools that treat notebooks as static documents.

How to use it?

Developers can easily install Runcell with a simple `pip install runcell`. Once installed, it integrates directly into your Jupyter Lab environment. You can then interact with it by giving it natural language instructions, such as 'Write code to plot this data' or 'Edit this cell to fix the error'. Runcell will then analyze your notebook, generate the necessary code, and execute it. The agent can also access and modify your Jupyter environment, meaning it can read files, execute commands, and interact with the kernel. So what? This is useful for data scientists who want to automate data analysis tasks, or developers looking for a powerful code generation and debugging assistant directly within the Jupyter environment.

Product Core Function

· Contextual Code Generation: Runcell understands the context of your notebook (data, charts, code) and uses that to generate relevant code. So what? This saves you time by automating code you would otherwise write by hand, especially when working with complex datasets or calculations.

· Direct Kernel Interaction: It interacts directly with the Jupyter kernel, allowing it to access real-time information and modify the environment. So what? This provides greater control and flexibility compared to tools that just process static notebook files, enabling more dynamic interaction with your code.

· Cell Editing and Execution: Runcell can edit and execute specific cells within your notebook. So what? This allows for automated debugging, refactoring, and iterative development directly within your Jupyter workflow.

· Built-in Tools: Runcell has built-in tools for file I/O, web searching, and other common tasks. So what? This enables the agent to perform a wider range of tasks, such as fetching data from the internet or reading/writing files, all within the Jupyter environment, making it a more self-sufficient and powerful assistant.

Product Usage Case

· Data Exploration: A data scientist can use Runcell to automatically generate code to visualize a dataset by simply instructing it to 'Create a scatter plot of X and Y'. So what? This quickly visualizes data for better understanding and identifying patterns.

· Code Debugging: If a developer has an error in their code, Runcell can analyze the code and suggest fixes, editing the cell directly. So what? This accelerates the debugging process and helps developers quickly identify and fix errors.

· Automated Reporting: A user can instruct Runcell to 'Write a report summarizing the key findings and generate the appropriate charts'. So what? This automates the creation of reports from data, saving time and effort in the reporting process.

· Prototype Development: A developer can use Runcell to quickly prototype new features in their Python code by using natural language instructions, such as 'Add a function to calculate the moving average'. So what? This lets you explore new coding ideas quickly and reduces the time spent writing boilerplate code.

9

oLLM: Optimized Large Language Model Inference for Consumer GPUs

Author

anuarsh

Description

oLLM is a project focused on accelerating the inference of Large Language Models (LLMs) with a large context window on consumer-grade GPUs. It tackles the computational challenges of processing long sequences of text, which is crucial for tasks like summarizing long documents or engaging in extended conversations. The innovation lies in optimizing the LLM inference process to make it more efficient on affordable hardware, opening up possibilities for developers to experiment with large-context LLMs without needing expensive infrastructure. This is achieved through clever techniques like optimized memory management and efficient kernel implementations.

Popularity

Points 3

Comments 6

What is this product?

oLLM is a software tool that speeds up the process of getting answers or generating text from powerful language models, especially when dealing with long pieces of text. The innovation is in how it handles the information inside your computer. Think of it like organizing your desk (the GPU's memory) to work more efficiently. It uses smart techniques to minimize the time it takes to load and process the text, allowing for faster results on normal computers. This means it can handle tasks that usually require powerful servers, making LLMs more accessible.
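To see why GPU memory becomes the bottleneck with long inputs, here is a rough back-of-the-envelope sketch. It uses the standard key/value cache size estimate for transformer models and does not describe oLLM's own techniques. The model dimensions (32 layers, 8 KV heads, head dimension 128, 16-bit values) are hypothetical and chosen only for illustration.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, seq_len, bytes_per_value=2):
    """Approximate key/value cache size for a single sequence.

    The leading factor of 2 accounts for storing both keys and values.
    """
    return 2 * num_layers * num_kv_heads * head_dim * seq_len * bytes_per_value


# Hypothetical model dimensions (assumptions, not taken from oLLM)
size = kv_cache_bytes(num_layers=32, num_kv_heads=8, head_dim=128, seq_len=100_000)
print(f"{size / 2**30:.1f} GiB")  # prints "12.2 GiB"
```

Under these assumptions, a 100,000-token context needs about 12 GiB for the cache alone, before counting the model weights, which is why careful memory handling matters on consumer GPUs.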

How to use it?

Developers can use oLLM by integrating it into their existing LLM applications or creating new ones. It acts as an optimized engine for running LLMs. You would load your chosen LLM, feed it your long text input, and oLLM will handle the heavy lifting of processing it quickly. The integration involves using the oLLM libraries within your code, pointing it towards your chosen model and data. This makes it ideal for applications where long-form text analysis or generation is key, such as document summarization tools, advanced chatbots capable of understanding long conversations, or content generation for complex topics.

Product Core Function

· Optimized Kernel Implementations: oLLM employs custom-built routines (kernels) that are highly efficient in performing the mathematical operations required by LLMs. This leads to significantly faster processing compared to generic implementations. So this means faster results when you give the LLM a task.

· Memory Management Optimization: The system efficiently manages how the computer's memory is used when processing the text. This optimization reduces the overhead of moving information around, making the process faster. This makes the system much more efficient at handling large amounts of text.

· Large Context Handling: It is particularly designed for tasks that involve a large context, meaning it is able to efficiently handle and process lengthy pieces of text. This makes it well-suited for tasks that require understanding the nuances of extensive documents or involved conversations.

· Consumer GPU Compatibility: oLLM is specifically designed to run on standard consumer-grade GPUs, making it accessible to a wider audience. This removes the barrier of needing expensive hardware to work with powerful LLMs, allowing more people to experiment with them.

Product Usage Case

· Document Summarization: A developer can use oLLM to build a tool that quickly summarizes long research papers or legal documents. By using oLLM's optimized processing, the summarization happens faster, saving valuable time. So you can instantly get the gist of a lengthy document.

· Advanced Chatbots: Create a chatbot that can understand and respond to long and complex conversations. oLLM allows the chatbot to maintain context across many turns, giving it a much better understanding of the conversation. So you get smarter and more comprehensive chatbots.

· Content Creation: Use oLLM to help in creating long-form content like articles or scripts. It helps by making the process of generating text from a large amount of background information faster and easier. So you can generate detailed and coherent content more quickly.

· Research Applications: Researchers can use oLLM to rapidly analyze large datasets of text, like customer reviews or social media feeds. This can lead to quicker insights and discoveries. So you can process massive amounts of textual data more effectively.

10

Linkfy: On-Device URL Cleaner

Author

muhammetarda

Description

Linkfy is an iOS app that cleans up messy URLs by removing tracking parameters (like utm_*, fbclid) directly on your device. It's designed to protect your privacy by stripping out the tracking information added by websites and apps. Instead of sending your data to a server, Linkfy processes the links locally, ensuring your browsing history stays more private. This project tackles the problem of excessive URL tracking, giving users more control over their data and improving the readability and shareability of links.

Popularity

Points 3

Comments 4

What is this product?

Linkfy is a Swift package and iOS app that intelligently removes tracking junk from URLs. When you share a link, the app analyzes it on your iPhone and eliminates the unnecessary tracking codes without altering the core functionality of the link. The core innovation lies in its on-device processing, eliminating the need for any server-side component, increasing privacy and speed. So it's a more private, faster and simpler way to share URLs without the tracking clutter.
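The cleaning step can be sketched in a few lines. This is a minimal illustration in Python rather than Linkfy's actual Swift code; the function name `clean_url` and the small blocklist (the `utm_` prefix and `fbclid`, the two examples named above) are assumptions, and the app's real rule set is likely larger.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Illustrative blocklist only; not Linkfy's actual rules.
TRACKING_PREFIXES = ("utm_",)
TRACKING_KEYS = {"fbclid"}


def clean_url(url: str) -> str:
    """Return the URL with known tracking query parameters removed."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in TRACKING_KEYS
        and not key.lower().startswith(TRACKING_PREFIXES)
    ]
    # Rebuild the URL with only the parameters that survived the filter.
    return urlunsplit(parts._replace(query=urlencode(kept)))


print(clean_url("https://example.com/article?id=42&utm_source=news&fbclid=abc123"))
# prints https://example.com/article?id=42
```

Everything runs locally on the string itself, so no network request is needed, which is the property the on-device design relies on.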

How to use it?

Developers can integrate Linkfy into their apps by using the Swift package. The main way users interact with it is via the iOS share sheet. When a user shares a link, they can choose Linkfy, which then cleans the link. You can also use it directly within the Linkfy app by pasting the link. This approach provides seamless integration into existing iOS workflows. It's designed for scenarios where privacy and clean links are essential. This means you can share clean links directly from the apps you already use, without changing your habits.

Product Core Function

· URL Parsing and Cleaning: The core function is to identify and remove tracking parameters from URLs, such as utm_campaign, fbclid, or other tracking parameters. Value: This enhances privacy by reducing data collection. It also makes URLs shorter and easier to read and share. Scenario: Share a news article with a friend, keeping the content but removing the website’s tracking data.

· On-Device Processing: The app performs all operations locally on the user's device without needing a server. Value: This feature significantly boosts user privacy and speed as no data is transmitted off the device. It enhances security by eliminating potential server-side vulnerabilities. Scenario: Cleaning a URL before sending it via SMS, without the link ever passing through a third-party cleaning server.

· Share Sheet Integration: The app offers a share sheet extension, allowing users to clean URLs directly from any iOS app that supports sharing. Value: Makes it easy for users to clean links anywhere on their phone. It ensures that users do not need to open the app to benefit from the cleaning. Scenario: Clean a link in Twitter before sharing it with a friend, keeping the content while removing tracking information.

· Swift Package Integration: The project is packaged as a Swift package, making it easy for developers to embed URL cleaning in their own applications. Value: It promotes wider use and improves user privacy across various applications. Scenario: A developer integrating Linkfy into a note-taking app, automatically cleaning any web links that are pasted into a note, providing a cleaner and safer experience for the user.

Product Usage Case

· Privacy-Focused Browsing: A user regularly shares articles and web content, but is concerned about their data privacy. They utilize Linkfy via the share sheet from their browser, automatically cleaning the URLs before sharing them via email or social media. This action reduces the tracking of their browsing activities.

· Developer Integration: A mobile app developer integrates Linkfy into their app for content sharing. Users within the app can share articles to social media or with friends. Before the links are shared, they are cleaned by Linkfy. This improves the user experience by eliminating clutter, gives users greater privacy, and boosts the app's overall reputation.

· Secure Communication: A user is sending links containing sensitive information to a colleague. Before sharing the link via a secure messaging app, they clean the URL using Linkfy. This ensures that no tracking parameters travel with the link, reinforcing the privacy and confidentiality of the communication.

11

Boot.dev Training Grounds: Interactive Backend Learning Platform

Author

wagslane

Description

Boot.dev Training Grounds is an interactive learning platform designed to teach backend development concepts in a hands-on manner. It utilizes a unique 'sandbox' environment where users can write and execute code directly within their browser, allowing for immediate feedback and iterative learning. The project focuses on making complex backend concepts accessible through practical exercises and real-world problem-solving, minimizing the initial setup burden and maximizing the time spent on actual coding. So this helps you jump right into building things.

Popularity

Points 4

Comments 3

What is this product?

This platform acts as a virtual playground for learning backend development. It provides pre-configured environments and challenges that let you write and test code directly. Instead of setting up a complex development environment on your computer, you can code in your browser. The innovation lies in its interactive, guided approach, turning theoretical concepts into practical coding experiences. It also offers instant feedback to students. So it takes the pain out of configuring your development environment and gets you coding faster.

How to use it?

Developers can access the platform through their web browser. You typically select a lesson or a challenge, read the instructions, write the code in the built-in editor, and then run it. The platform will then provide feedback based on the code's execution and output, helping you understand the concepts and identify errors. You can use it as your main practice environment for backend concepts and for exploring different technologies. So you can easily build your skills and learn by doing.
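The run-and-check loop described here can be illustrated with a small sketch. This is a hypothetical checker, not Boot.dev's implementation: the function name `check_submission` and its comparison rule (exact match on trimmed standard output) are assumptions, and a real platform would run code in an isolated sandbox rather than a plain subprocess.

```python
import subprocess
import sys


def check_submission(source: str, expected_output: str, timeout: float = 5.0):
    """Run learner code in a separate Python process and compare its stdout.

    Returns a (passed, message) pair. A subprocess is NOT a security sandbox;
    it is used here only to keep the sketch self-contained.
    """
    try:
        result = subprocess.run(
            [sys.executable, "-c", source],
            capture_output=True,
            text=True,
            timeout=timeout,
        )
    except subprocess.TimeoutExpired:
        return False, "Timed out"
    if result.returncode != 0:
        # Surface the error text so the learner can see what went wrong.
        return False, result.stderr.strip()
    actual = result.stdout.strip()
    if actual == expected_output.strip():
        return True, "Correct"
    return False, f"Expected {expected_output!r}, got {actual!r}"


print(check_submission("print(1 + 1)", "2"))  # prints (True, 'Correct')
```

Returning the error text or the mismatch alongside the pass/fail flag is what turns a simple test runner into immediate feedback.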

Product Core Function

· Interactive Code Editor: Allows users to write and execute code directly within the browser. This eliminates the need for local setup and allows for immediate feedback on coding efforts. The value is in quick iteration and rapid learning.

· Pre-configured Environments: Provides pre-configured coding environments for specific backend technologies and tasks, such as Python, Go, or database interactions. This means you don't need to install anything initially. The value is in reducing the setup time and letting you focus on learning.

· Hands-on Exercises and Challenges: Delivers guided exercises and real-world challenges to reinforce learning. This approach turns abstract concepts into practical skills. The value is in applying knowledge to real-world scenarios.

· Instant Feedback and Assessment: Offers instant feedback on the code's execution, helping users identify and correct errors immediately. The value is in speeding up the learning loop, letting developers fix problems faster and learn from mistakes in real-time.

Product Usage Case

· Learning API Development: A developer could use the platform to learn how to build REST APIs using Python and the Flask framework. The platform provides a pre-configured environment and exercises that guide you through the creation of API endpoints, request handling, and response formatting. So you build real APIs and learn how they work.

· Database Interactions: A user can use the platform to practice connecting to and querying databases such as PostgreSQL or MySQL. The platform provides the necessary libraries and tools, and the user writes the code that interacts with the database to retrieve, insert, and update data. So you get to explore how to use databases in your backend.

12

Gensee Search Agent: Smarter Web Search for AI

Author

bobby_zhu

Description

Gensee Search Agent simplifies web search for AI applications. It tackles the tedious process of finding information online by intelligently handling search, crawling websites, extracting relevant content, and dealing with errors. This frees up AI developers to focus on building their core product, instead of wrestling with the complexities of web data retrieval. It offers a single API call that covers the whole search pipeline, built-in error handling, and efficient parallel search. This results in improved accuracy and faster development for AI agents. So, this is useful because it makes building AI applications that rely on web data much easier and more effective, saving developers time and effort.

Popularity

Points 7

Comments 0

What is this product?

Gensee Search Agent is a tool that simplifies web search for AI agents. It uses a single API call to handle the complexities of web searching, web crawling and browsing. It includes features like built-in error handling, parallel search (breadth-first approach) to eliminate bad results quickly, and goal-aware extraction to get content that is highly relevant to the query. So, this is essentially a smart web search engine that takes the grunt work out of finding information online for your AI projects.

How to use it?

Developers can integrate Gensee Search Agent into their AI applications using a simple API call. They provide a search query, and the agent handles all the underlying complexities of web search, crawling, and extraction. The agent returns structured, relevant data, directly usable by downstream tasks. This is useful for any AI agent that needs to gather information from the web, such as those that answer questions, summarize information, or perform tasks based on online content.

Product Core Function

· Web Searching, Crawling, and Browsing: This feature allows the AI agent to find and navigate websites based on a search query. It's like having a built-in web browser specifically designed for AI. This is valuable because it provides access to a vast amount of information that AI applications can use.

· Built-in Error Handling, Retries, and Fallbacks: This handles common issues during web crawling, such as broken links or temporary website outages. It automatically retries failed operations and uses fallback mechanisms to ensure the agent continues to function. This improves the reliability of the AI agent, preventing it from crashing or providing incomplete results.

· Breadth-First Search Approach: This method searches multiple websites simultaneously and quickly discards irrelevant results, so the AI agent does not spend time going deep into a poor source. This helps the agent find the right information faster, saving time and resources.

· Goal-Aware Extraction: This focuses on extracting only the most relevant content from the search results, tailor-made for downstream tasks. It is like having a smart filter that ensures the AI agent only gets the information it actually needs. This dramatically improves the accuracy and performance of the AI agent by minimizing data overload and focusing on what matters.
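The combination of parallel fetching, retries, and early filtering described in this list can be sketched as follows. This is a generic illustration and not Gensee's API: the function names, the `fetch` and `is_relevant` callables, and the fake fetcher in the demo are all hypothetical stand-ins.

```python
from concurrent.futures import ThreadPoolExecutor


def fetch_with_retries(fetch, url, retries=2):
    """Call fetch(url), retrying on any exception; return None if every attempt fails."""
    for _ in range(retries + 1):
        try:
            return fetch(url)
        except Exception:
            continue
    return None


def breadth_first_search(urls, fetch, is_relevant, max_workers=8):
    """Fetch all candidate URLs in parallel and keep only the relevant pages."""
    urls = list(urls)
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        pages = list(pool.map(lambda u: fetch_with_retries(fetch, u), urls))
    # Failed fetches (None) and irrelevant pages are dropped together.
    return {
        url: page
        for url, page in zip(urls, pages)
        if page is not None and is_relevant(page)
    }


def fake_fetch(url):
    """Stand-in for a real HTTP fetch, so the sketch runs offline."""
    if "broken" in url:
        raise ConnectionError("simulated outage")
    return f"content of {url}"


results = breadth_first_search(
    ["https://a.example/python", "https://broken.example", "https://b.example/cooking"],
    fetch=fake_fetch,
    is_relevant=lambda page: "python" in page,
)
print(results)
# prints {'https://a.example/python': 'content of https://a.example/python'}
```

One failing site does not stop the batch: the broken URL is retried, then skipped, while the remaining results are filtered for relevance.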

Product Usage Case

· Improved GAIA benchmark accuracy: Gensee Search Agent improved the accuracy of the Owl AI model (an open-source implementation of Manus) on the GAIA benchmark by 23%. This demonstrates the tool's effectiveness in improving the performance of existing AI systems. So this is useful for researchers and developers working on cutting-edge AI applications, helping them to push the boundaries of what's possible.

· Boosted an AI agent's accuracy by 40% for a San Diego developer: The tool helped a developer improve the accuracy of their AI agent, showing its real-world applicability. So this is useful for developers building AI applications that require accurate and reliable web search capabilities. The result is a direct impact on the end product.

13

Simple PDF Scanner - A Streamlined, Subscription-Free Mobile Scanner

Author

Akzid

Description

This project, Simple PDF Scanner, is a straightforward iOS application designed for scanning documents into PDF format. It addresses the common frustration of bloated scanner apps that often require recurring subscriptions. The core innovation lies in providing a simple, fast, and functional scanning experience without the unnecessary features or payment models found in many commercial alternatives. It leverages technologies like Optical Character Recognition (OCR) to make scanned text searchable, along with password protection, custom file naming, and import options from both camera and photos, all while supporting A4 paper sizes. This project offers a refreshing take on document scanning by prioritizing user experience and avoiding the pitfalls of subscription-based apps. So, this app gives you a hassle-free way to digitize documents on your phone without any hidden costs or complex interfaces.

Popularity

Points 4

Comments 2

What is this product?

Simple PDF Scanner is a mobile app that converts physical documents into digital PDF files. It uses your phone's camera to capture images of documents, then processes them. Key technology includes OCR, which means it can recognize text in the scanned images, making the text searchable and editable. It also incorporates features like password protection for your PDFs and supports different paper sizes. The main innovation is its simplicity and focus on core functionality, providing a user-friendly experience without the annoyance of subscription fees. So, it's a convenient and cost-effective way to manage your documents digitally.

How to use it?

Developers can use Simple PDF Scanner as a model for building their own simple, feature-rich applications without the complexities of monetization. They can examine how features like OCR and PDF generation were integrated within the app and learn from the development choices made to prioritize user experience. Also, it can serve as a benchmark to compare with existing scanning apps and see how features can be streamlined. Think of using it on your iPhone to quickly scan receipts, documents, or any paperwork and create password-protected PDFs that you can easily share or store. So, developers can learn how to create intuitive apps and users get a better document scanning experience.

Product Core Function

· OCR (Optical Character Recognition): This feature allows the app to recognize text within scanned images. It means you can search for specific words within your scanned documents, making it much easier to find what you need. This technology is crucial for turning static images into searchable and usable text. So, you don't have to manually re-type documents.

· Password-Protected PDFs: The app supports password-protected PDFs, adding a layer of security to your scanned documents. This is especially useful for sensitive information. So, you can securely share confidential documents.

· Custom Filenames: You can give your scanned files custom names, which is helpful for organizing and easily finding them later. This improves document management and organization. So, you can name files for easy identification.

· Camera & Photo Import: The app allows you to scan documents using your camera or import images from your photo library, giving you flexibility in how you capture documents. This feature allows easy import from any existing photo or immediate capture. So, you can choose the most convenient way to scan your documents.

· A4 Support: The app supports A4 paper sizes, ensuring that your scanned documents are correctly formatted. This makes the app suitable for international use. So, your documents will be formatted correctly, no matter where you are.

Product Usage Case

· A small business owner needs to scan receipts and invoices. They can use Simple PDF Scanner to quickly digitize their paperwork. The OCR feature will allow them to search through the scanned documents for specific items or dates. Password protection keeps the financial data secure. So, it simplifies accounting and record-keeping.

· A student needs to scan notes and study materials. They can use Simple PDF Scanner to scan their notes and store them digitally, creating searchable PDFs. The app's simplicity ensures that the process is quick and easy, saving them time and effort. So, it provides a convenient digital study library.

· A real estate agent needs to scan contracts and property documents. They can use the app to scan all necessary documents and password-protect them. The custom filenames will allow for organized storage, and the A4 support will keep page formatting consistent across all documents. So, it streamlines document handling and protects sensitive information.

14

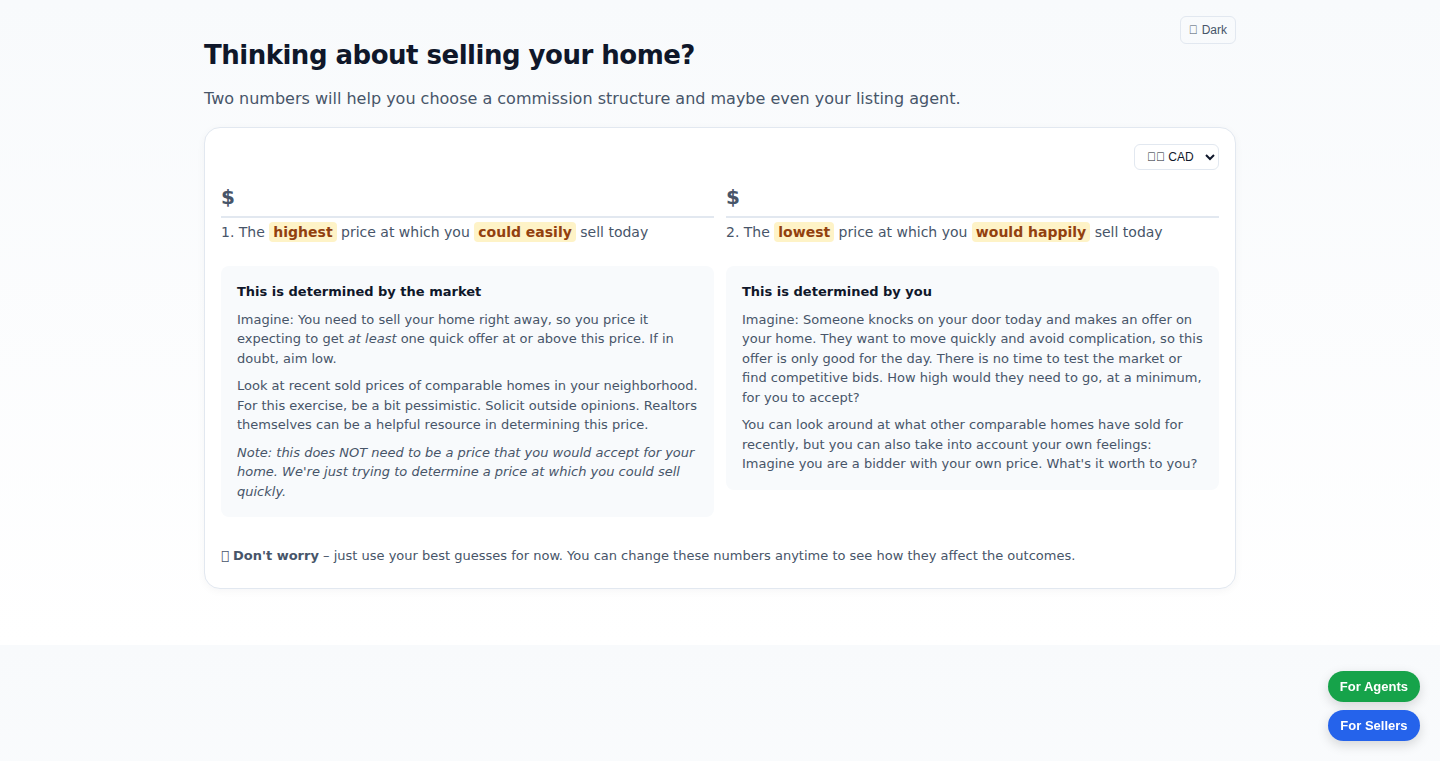

ProgressiveCommission Calculator: Aligning Agent Incentives for Home Sales

Author

ajcatton

Description

This project is a dynamic calculator that helps home sellers understand and design a "Progressive Commission" structure for their real estate agents. The core idea is to incentivize agents to achieve higher sale prices by rewarding them more for exceeding a certain price point. This addresses the common issue where agents might be motivated to quickly close a deal, even at a lower price, under traditional fixed-percentage commission models. The project's innovation lies in providing a flexible tool for sellers to tailor commission structures, potentially leading to fairer outcomes and better negotiation strategies. So, this helps you potentially get a higher selling price for your house.

Popularity

Points 2

Comments 4

What is this product?

This is a web-based calculator and explainer. It helps home sellers understand how commission structures work, specifically focusing on an alternative model called "Progressive Commissions." Instead of a flat percentage, the agent's commission increases more significantly as they achieve a higher selling price. The calculator allows sellers to experiment with different commission tiers and see how agent incentives change. The underlying technology likely involves HTML, CSS, and JavaScript for the user interface, and possibly a backend (like Python or Node.js) to handle calculations and data storage. The innovation is the user-friendly interface for exploring complex financial incentives. So, this helps you understand and create better commission deals.

How to use it?

Home sellers can use the calculator by inputting the estimated home value and desired commission structure. They can adjust the commission rates at different price tiers and immediately see how the agent's earnings are affected. This allows them to experiment with different incentive models and compare the potential payouts. The integration is simple: it's a website. You just visit it and start playing with the numbers. So, this lets you see the impact of different commission strategies before you even talk to an agent.

Product Core Function

· Commission Calculation: The core function is to calculate the agent's commission based on the sale price and the user-defined progressive commission structure. This involves mathematical formulas to determine the commission at each price tier. This allows you to see exactly how much your agent will earn under different scenarios.

· Visualization: The calculator likely uses charts or graphs to visualize the relationship between sale price and agent commission, making it easy to understand the incentive structure. This helps you easily grasp the implications of each commission structure.

· Scenario Comparison: Users can compare different commission structures side-by-side to see how they affect the agent's payout at various price points. This provides valuable insights for negotiation. This helps you see which deal is better for you.

· User Interface: A clean and intuitive user interface that allows users to easily input data, adjust commission rates, and view the results. This keeps the tool accessible to non-technical users, so you can experiment with the numbers without any special knowledge.
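The commission calculation described above can be sketched as follows. This is a minimal example and not the calculator's actual code: it assumes marginal tiers (each rate applies only to the slice of the price inside its tier, like tax brackets), and the function name and the example rates are hypothetical.

```python
def progressive_commission(sale_price, tiers):
    """Commission under marginal tiers.

    tiers is a list of (threshold, rate) pairs sorted by threshold. Each rate
    applies only to the part of the price above its threshold and below the
    next threshold.
    """
    total = 0.0
    for i, (threshold, rate) in enumerate(tiers):
        upper = tiers[i + 1][0] if i + 1 < len(tiers) else float("inf")
        if sale_price > threshold:
            total += (min(sale_price, upper) - threshold) * rate
    return total


# Hypothetical structure: 1% up to $500,000, then 10% on anything above it
tiers = [(0, 0.01), (500_000, 0.10)]
for price in (480_000, 500_000, 550_000):
    print(price, round(progressive_commission(price, tiers), 2))
```

With these example numbers, raising the sale price from $500,000 to $550,000 doubles the agent's commission from about $5,000 to about $10,000. Under a flat 2% commission the same $50,000 increase would add only $1,000, which is the incentive gap the progressive model is meant to close.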

Product Usage Case

· Real Estate Negotiation: A homeowner can use the calculator to create a progressive commission structure that incentivizes their agent to strive for a higher selling price. This provides a concrete framework for negotiation. This helps you get a better deal when selling your house.

· Agent Comparison: Sellers can compare different commission models proposed by various agents, evaluating how each model incentivizes the agent's performance. This helps choose the right agent.

· Market Analysis: Real estate professionals could use the calculator to analyze how different commission structures could affect the competitiveness of their services in a given market. This gives agents the power to customize the deals.

· Educational Tool: The calculator serves as an educational tool, helping people understand the complexities of real estate commissions and the impact of different incentive models. This empowers you to know more about real estate deals and the market.

15

Devplan: AI-Powered Product Development Accelerator

Author

five9s

Description

Devplan is an AI tool designed to speed up software development by automating the planning and specification phases. It leverages a custom-built 'context engine' that analyzes code from GitHub and web resources to understand project context. This allows Devplan to generate detailed product requirement documents, user stories, and technical designs, providing effort estimates and structured coding prompts. The core innovation lies in its ability to seamlessly integrate with existing tools like Linear, Jira, and various AI coding assistants, creating a unified workflow for faster and more efficient product development.

Popularity

Points 6

Comments 0

What is this product?

Devplan is like a smart assistant for product development. It uses AI to understand your project by examining your code (from GitHub) and other online information. This understanding enables it to create detailed plans, user stories (describing what users need), and technical designs. Think of it as automating the tedious pre-coding work. It then gives you a rough estimate of the effort and complexity of each task. Devplan doesn't write the code itself (though it can generate structured prompts for AI code generation tools), but it streamlines the path from idea to working code by handling planning and specification. So, instead of spending hours writing documents, you can focus on building.

How to use it?

Developers can use Devplan by providing a project context (e.g., a GitHub repository or a set of initial ideas). Devplan’s context engine analyzes this information and automatically generates project documentation, user stories, and coding prompts. These prompts are designed to be used with other AI coding tools. You can also integrate Devplan with project management tools like Linear and Jira to streamline the workflow, pushing generated documentation directly into your existing project management system. Essentially, it automates the often-overlooked planning phase. For example, let's say you have an idea for a new feature; Devplan will help you create a detailed roadmap, outline tasks, and prepare the coding instructions.

Product Core Function

· Context Engine: This is the brains of Devplan. It analyzes your code from GitHub and gathers information from the web to understand your project's context. For developers, this means the AI works from your project's actual history instead of giving generic answers, so the plans and prompts it produces are more relevant.

· Automated Document Generation: Devplan automatically creates product requirement documents (PRDs), user stories, and technical designs. This saves developers a lot of time and effort in writing detailed specifications, and it provides a solid base for development.

· Effort and Complexity Estimation: It provides estimated effort and complexity for each user story, which helps with project planning and resource allocation. Developers can better gauge project scope and deadlines.

· Structured Coding Prompts: Devplan creates well-defined coding prompts for AI code generation tools. Focused prompts give those tools clearer instructions and reduce the need for rework, helping developers translate requirements into code quickly.

· Integration with Project Management Tools: It integrates with project management tools like Linear and Jira, so generated documents and tasks can be pushed into them directly. This cuts the time spent copying and pasting AI output by hand.

Product Usage Case

· Planning a New Feature: A development team wants to build a new user authentication feature. They provide Devplan with context (e.g., their existing codebase). Devplan analyzes the code, understands the existing authentication flow, and generates detailed specifications and coding prompts for this feature. This helps developers save a lot of time from the start.

· Estimating Project Scope: A project manager needs to estimate the effort required to build a new dashboard. Devplan creates user stories and gives effort estimates for each story. So, the project manager can better plan resources and set more realistic deadlines.

· Accelerating Code Generation with AI: A developer uses Devplan to generate well-structured coding prompts for an AI coding assistant. The AI assistant uses these prompts to quickly generate code snippets for a new API endpoint. The well-crafted prompts shorten the time the developer spends on the task.
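As a rough illustration of the "Estimating Project Scope" case, per-story estimates can be rolled up into a sprint count. The post does not describe Devplan's output format, so the `UserStory` shape, the point-based unit, and the velocity parameter below are all hypothetical.

```rust
/// A user story with an effort estimate (hypothetical shape; Devplan's
/// actual output format is not described in the post).
#[derive(Debug, Clone)]
pub struct UserStory {
    pub title: String,
    pub effort_points: u32,
}

/// Sums the effort estimates across all stories.
pub fn total_effort(stories: &[UserStory]) -> u32 {
    stories.iter().map(|s| s.effort_points).sum()
}

/// Number of sprints needed at a given team velocity (points per sprint),
/// rounded up. Returns `None` when the velocity is zero.
pub fn sprints_needed(stories: &[UserStory], velocity: u32) -> Option<u32> {
    if velocity == 0 {
        return None;
    }
    let total = total_effort(stories);
    // Integer ceiling division: any remainder costs one more sprint.
    Some((total + velocity - 1) / velocity)
}
```

Three dashboard stories estimated at 3, 5, and 8 points total 16 points, which at an assumed velocity of 10 points per sprint rounds up to two sprints.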

16

Unwrap_or_AI: AI-Powered Error Handling in Rust

Author

NoodlesOfWrath

Description

This project introduces a Rust macro called `unwrap_or_AI` that attempts to recover from errors at runtime. The standard `unwrap()` panics when it is called on an `Err` or `None`; `unwrap_or_AI` instead asks an AI model to predict a reasonable substitute value, aiming to keep your program running when unexpected issues arise. It is an unconventional approach to error handling that could reduce the frequency of application crashes, provided the predicted values are acceptable to the rest of the program.

Popularity

Points 4

Comments 2

What is this product?

This is a Rust macro that uses AI to handle errors. When your code encounters a situation where it would normally 'panic' (crash) due to an error like a missing file or an unexpected value, `unwrap_or_AI` steps in. It analyzes the surrounding code, comments, and input data, then asks an AI to guess the correct value to use, keeping your program alive. The key innovation lies in employing AI to intelligently address runtime errors, which could improve robustness and developer efficiency.

How to use it?

Developers use this by including the `unwrap_or_AI` macro in their Rust code in place of `unwrap()` calls. Where `unwrap()` would have panicked, `unwrap_or_AI` calls the AI, and the AI-generated value is substituted so that execution can continue. Integration amounts to replacing your existing `unwrap()` calls. So what? You can potentially handle more errors without your program crashing, which keeps it running longer.
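For comparison, the standard library already offers deterministic alternatives to `unwrap()`. The sketch below does not use `unwrap_or_AI`, since its exact invocation syntax is not shown here. It only contrasts a panicking `unwrap()` with `unwrap_or` and `unwrap_or_else`, whose closure marks the spot where any fallback strategy would plug in. The function names and the 8080 default are illustrative assumptions.

```rust
use std::num::ParseIntError;

/// Parses a port number and panics on bad input, as a plain `unwrap()` does.
pub fn port_or_panic(raw: &str) -> u16 {
    raw.trim().parse::<u16>().unwrap()
}

/// Same parse, but falls back to a fixed default instead of panicking.
/// The default of 8080 is an arbitrary illustrative choice.
pub fn port_or_default(raw: &str) -> u16 {
    raw.trim().parse::<u16>().unwrap_or(8080)
}

/// Same parse, with the fallback computed from the error by a
/// caller-supplied closure. The closure is where any recovery strategy
/// (a cached value, a config lookup, or a guess) would be plugged in.
pub fn port_or_else<F>(raw: &str, fallback: F) -> u16
where
    F: FnOnce(ParseIntError) -> u16,
{
    raw.trim().parse::<u16>().unwrap_or_else(fallback)
}
```

Calling `port_or_default("not a port")` returns the default, while `port_or_panic("not a port")` aborts the current thread with a panic. Whatever produces the fallback, the caller still receives a value that did not come from the input, so it is worth logging the original error before discarding it.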

Product Core Function

· AI-Powered Error Prediction: The core functionality is the AI's ability to analyze context and guess a suitable value when an error occurs. The value is predicted by an AI model. The benefit is avoiding panics caused by common failures such as a missing value (`None`) or a file that cannot be found, which can make a system more resilient as long as the guessed values are sensible.

· Contextual Analysis: The macro inspects the surrounding code, including function signatures, comments, and input values, to provide the AI with the necessary context. This helps the AI make more accurate predictions. It is valuable because context analysis improves the likelihood that the AI will guess the correct value, making the program's error handling more effective.

· Seamless Integration: By replacing `unwrap()` with `unwrap_or_AI`, developers can easily integrate AI-driven error handling into their existing Rust projects. This offers developers a convenient and easy way to enhance the robustness of the system. The value is that it simplifies incorporating more intelligent error management without requiring significant code refactoring.

· Reduced Crashes and Improved Uptime: Because `unwrap_or_AI` attempts to recover from errors instead of panicking, it can reduce the number of program crashes. The benefit is increased uptime and a better user experience, with the trade-off that a guessed value may not be the correct one.

Product Usage Case

· File Handling: When a program tries to read a file that doesn't exist, `unwrap_or_AI` could guess a default value, preventing the program from crashing. This is useful to recover from minor issues without breaking the application, providing more flexibility.

· Network Requests: If a network request times out, `unwrap_or_AI` could guess a default value or cached result, preventing an immediate failure. You could enhance the user experience by offering fallback mechanisms.

· Data Parsing: When parsing data from an external source and encountering an unexpected format, `unwrap_or_AI` could attempt to infer a suitable value instead of failing. The value is increased resilience when processing external data, so the application is less likely to stop on malformed input.

17

BirthText: Facebook Birthday Exporter for SMS Reminders

Author

samfeldman

Description

BirthText is a Chrome extension that allows you to export your Facebook friends' birthdays and receive SMS/WhatsApp reminders. This project focuses on simplifying the process of remembering birthdays by leveraging the ubiquitous nature of text messaging. It innovatively solves the problem of scattered birthday information by bridging the gap between Facebook and text-based reminders. It caters to users who prefer SMS or WhatsApp notifications over push notifications or calendar events, embracing a 'text-first' approach for simplicity and reliability. It’s built on the recognition that text messages are a common and personal form of communication.

Popularity

Points 5

Comments 0

What is this product?

BirthText is a Chrome extension that pulls birthday information from your Facebook friends' profiles. It then allows you to select which birthdays you want to import into birthdays.app, which sends SMS or WhatsApp reminders. The innovation lies in its ability to easily extract and convert Facebook birthday data into a format usable for text message reminders, thereby personalizing and streamlining the reminder process. It uses OAuth (a secure way of authorizing access) for Google Calendar sync. So what? You'll never forget a birthday again!

How to use it?

Install the Chrome extension, which pulls birthday information from Facebook. After selecting the friends whose birthdays you want to remember, you import them to birthdays.app using an import code provided by the birthdays.app web app. The app handles sending the text message reminders. So what? It's easy to use, and you will never miss a birthday again!

Product Core Function

· Facebook Birthday Extraction: The core function is extracting birthday information from Facebook profiles using a Chrome extension. This involves interacting with the Facebook website to scrape the necessary data and format it for the reminder service. The value: Easy data retrieval.

· Selective Import: The extension provides an option to select specific friends for import. The user is in charge. Value: User-friendly and efficient.

· Integration with birthdays.app: The extension interacts with the birthdays.app web app through an import code to add selected birthdays to the reminder system. Value: Selected birthdays reach the reminder service without manual re-entry.

· SMS/WhatsApp Reminder Generation: birthdays.app's backend then generates and sends SMS or WhatsApp messages to remind you of the birthdays. Value: Never miss a birthday again.
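The flow described above (extract, select, remind) can be sketched as two small functions. This is only an illustration of the data flow: the `Birthday` shape, the function names, and the reminder wording are hypothetical, and neither the extension's scraping logic nor the birthdays.app import format is shown in the post.

```rust
/// A birthday as it might look after extraction (hypothetical shape).
#[derive(Debug, Clone, PartialEq)]
pub struct Birthday {
    pub name: String,
    pub month: u8, // 1..=12
    pub day: u8,   // 1..=31
}

/// Selective import: keep only the friends the user picked.
pub fn select_for_import(all: &[Birthday], chosen_names: &[&str]) -> Vec<Birthday> {
    all.iter()
        .filter(|b| chosen_names.contains(&b.name.as_str()))
        .cloned()
        .collect()
}

/// Builds the reminder texts due on a given calendar date.
/// The message wording is an assumption, not the service's real template.
pub fn reminders_due(imported: &[Birthday], month: u8, day: u8) -> Vec<String> {
    imported
        .iter()
        .filter(|b| b.month == month && b.day == day)
        .map(|b| format!("Reminder: it's {}'s birthday today!", b.name))
        .collect()
}
```

Keeping selection separate from reminder generation mirrors the split in the description: the extension decides what is imported, and the reminder service decides when a message goes out.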

Product Usage Case

· Reminder-centric Social Groups: A user with a close group of friends on Facebook wants to keep in touch on birthdays and knows many people prefer SMS. BirthText is used to quickly pull the birthdays into a text reminder system, making sure no one is left out. So what? It strengthens social connections with easy text reminders.

· Simple Reminder System Preference: Someone prefers SMS reminders over calendar events and push notifications. By using BirthText, they avoid the complexity of other calendar or reminder systems and are able to get reminders on their most-used communication channel. So what? It's great if you want simple and direct reminders.

18

Honcho AI: Penny for Your Thoughts

Author

vvoruganti

Description

This project leverages a memory and reasoning engine (Honcho) to create an AI interviewer. Users can be interviewed by the AI and generate unique information, which other users can then pay to access through micro-transactions. This allows experts to monetize their knowledge, turning their expertise into a stream of income. The core innovation lies in the integration of an AI interviewer with a payment system, enabling a novel model for knowledge sharing and monetization.

Popularity

Points 4

Comments 1

What is this product?

This is a platform where you interact with an AI interviewer powered by the Honcho engine. The AI asks you questions to extract your expertise, then packages the extracted knowledge into a sellable format. Think of it as a marketplace for niche expertise. The innovation is in combining AI-powered knowledge extraction with a micro-transaction system, allowing users to directly profit from their knowledge. So what? This means you can get paid for the insights you already possess!

How to use it?

Developers can integrate with the Honcho AI through APIs, allowing them to embed AI-driven interview and monetization features into their own applications. They can build platforms for experts, create educational tools, or develop personalized knowledge bases. The API likely handles the interview process, knowledge extraction, and micro-transaction management. So what? You can build new revenue streams and create innovative learning platforms by tapping into expert knowledge.

Product Core Function

· AI Interviewing: The core functionality is the AI agent that conducts interviews, extracting specific and unique information from the user. The AI’s ability to understand context and ask relevant questions is crucial. So what? This allows for efficient knowledge capture that might be difficult or time-consuming with traditional methods.

· Knowledge Structuring: The project probably involves processing the interview responses and organizing the information into a readily consumable format, such as text summaries or question-and-answer pairs. So what? This makes the extracted knowledge easily accessible and valuable to paying users.

· Micro-Transaction System: A built-in payment system allows users to pay small amounts to access specific pieces of information extracted from the interview. So what? This opens up new revenue models for experts and offers a way to get specific answers without investing in expensive consultations or research.